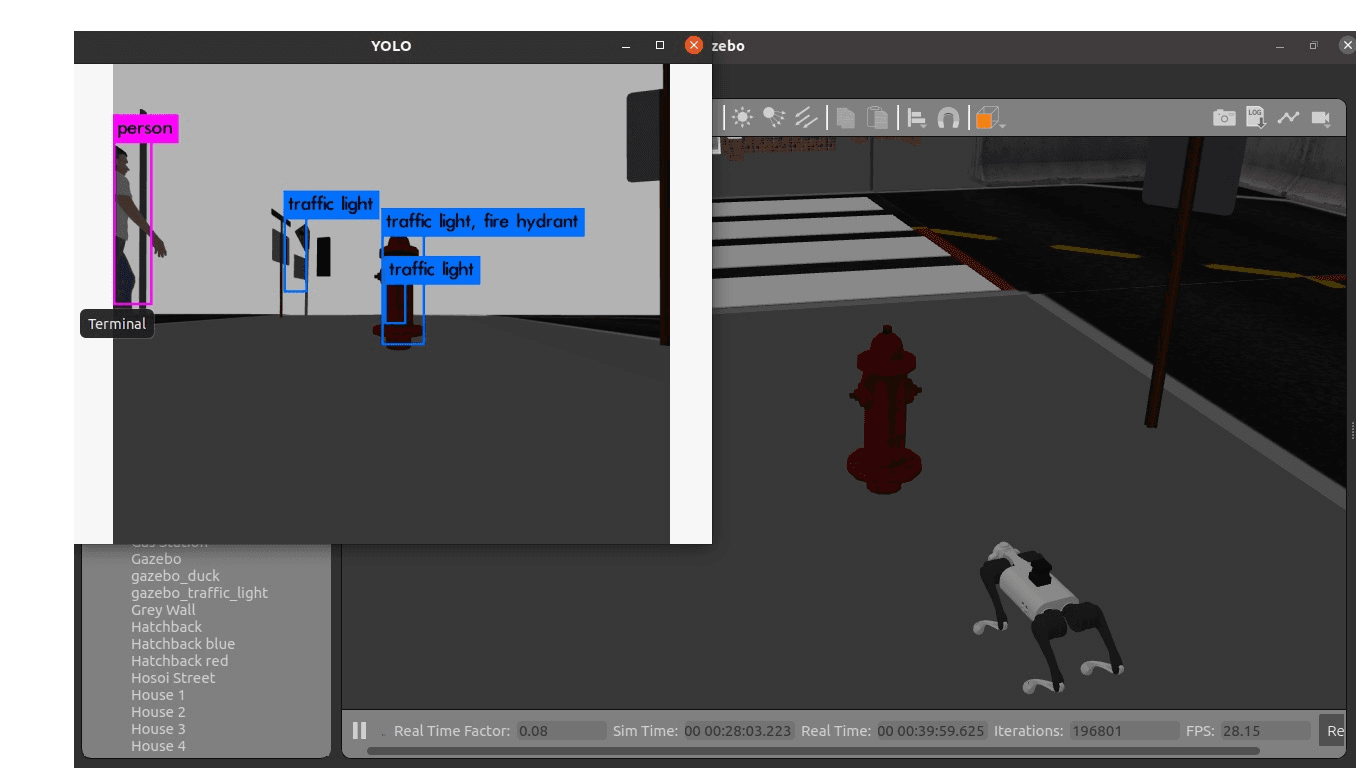

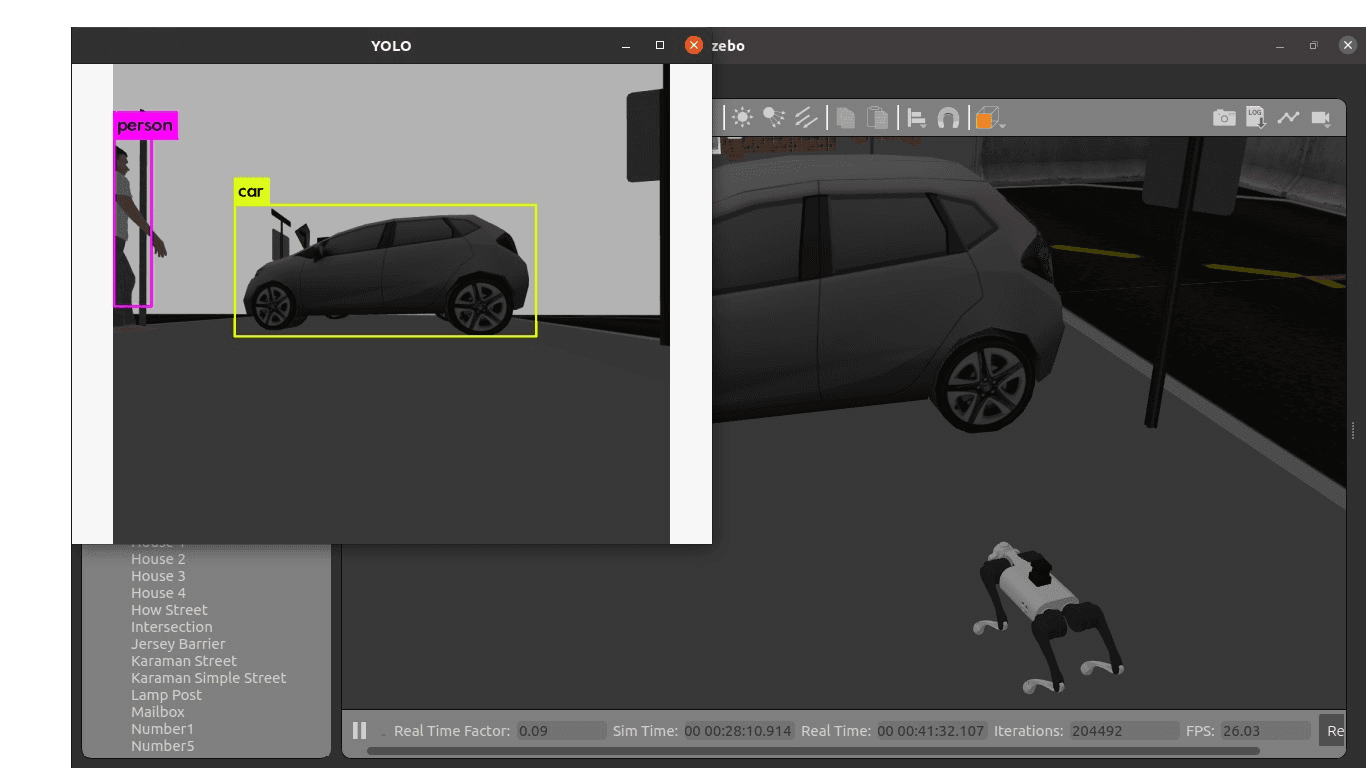

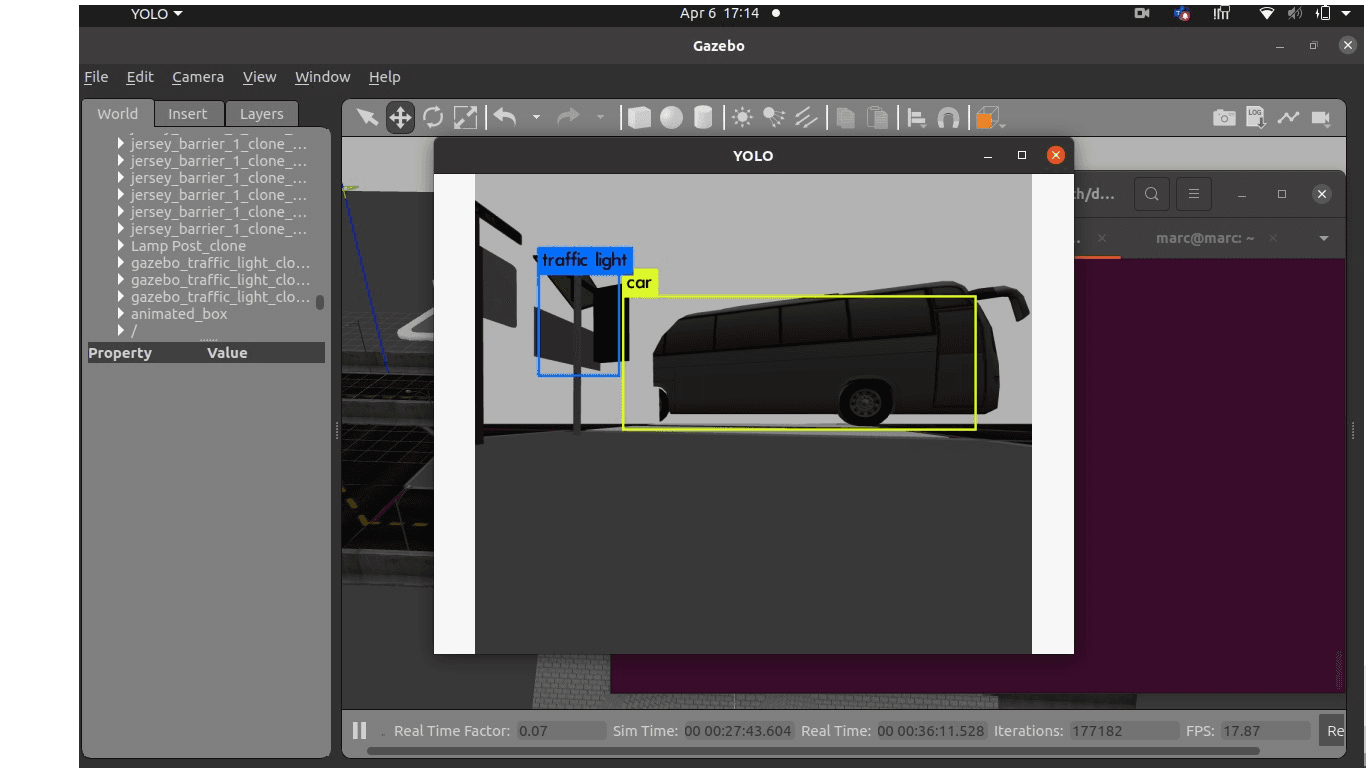

ROS-based quadruped guidance system using YOLO perception and audio feedback to support visually impaired users in navigation tasks.

Platform

Unitree Go1 + Gazebo

Perception

Real-time YOLO pipeline

Output

Synchronized audio cues

Built and integrated perception modules for a Unitree Go1 guide-dog concept. The system detects obstacles, estimates scene context, and produces actionable audio cues.

Assistive navigation requires dependable obstacle detection with low-latency, understandable feedback for end users.

Integrated YOLO-based object detection with ROS topics and an audio feedback layer to announce hazards and nearby objects.
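As a rough illustration of the detection-to-audio step, the sketch below turns one frame of detections into a single short spoken phrase. It is not the project's actual code: the `Detection` fields, the `make_cue` and `direction_of` helpers, the 0.5 confidence threshold, the thirds-based direction split, and the use of bounding-box height as a stand-in for proximity are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Detection:
    """One detector output. All field names here are hypothetical."""
    label: str          # class name, e.g. "person"
    confidence: float   # detector score in [0, 1]
    x_center: float     # box centre, normalised to [0, 1] across image width
    box_height: float   # box height, normalised to [0, 1] of image height


def direction_of(x_center: float) -> str:
    """Split the image into thirds to get a coarse bearing."""
    if x_center < 1 / 3:
        return "left"
    if x_center > 2 / 3:
        return "right"
    return "ahead"


def make_cue(detections: List[Detection],
             min_confidence: float = 0.5) -> Optional[str]:
    """Return one short phrase for the most prominent confident detection.

    Box height is used as a rough proxy for proximity (assumption: no
    depth data is available). Returns None when nothing passes the
    confidence threshold, so the audio layer can stay silent.
    """
    confident = [d for d in detections if d.confidence >= min_confidence]
    if not confident:
        return None
    nearest = max(confident, key=lambda d: d.box_height)
    return f"{nearest.label} {direction_of(nearest.x_center)}"


if __name__ == "__main__":
    frame = [
        Detection("chair", 0.82, 0.15, 0.40),
        Detection("person", 0.91, 0.55, 0.70),
        Detection("bench", 0.30, 0.90, 0.95),  # dropped: below threshold
    ]
    print(make_cue(frame))  # prints "person ahead"
```

In a ROS setup, a phrase like this could be published as a `std_msgs/String` message on a topic that a text-to-speech node subscribes to. That wiring is likewise an assumption here, not a description of the project's nodes.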

Demonstrated reliable perception in Gazebo simulation and in runs on the physical robot, showing that the integration works in practice for assistive robotics.